Fun with Neural Networks

Experiments in Machine Learning and Algorithmic Music Composition

A neural network is basically a brain. Well, not really. It's a piece of software designed to let a computer mimic the kind of pattern-based thought processes used by most organic lifeforms. So, a brain. Except not.

A neural network learning optical character recognition across multiple fonts.

Neural networks work by building a "model" from lots and lots of examples of a thing. They then start making guesses and comparing those guesses against that model, refining each iteration based on how accurate the previous guess was. For example, if you were trying to teach a neural network about oranges, you might build a model from lots of different pictures of oranges, orange trees, orange peels, people eating oranges, and so on. The first few iterations of the network might spit out something that resembles a fish. Then maybe a football. Then a pear, then eventually apples, peaches, plums, nectarines, and maybe a few oranges. Eventually, if the model is constructed right, the network will only produce oranges. (Note: this is a gross oversimplification.)
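To make the "guess, compare, refine" loop a little more concrete, here's a minimal sketch in Python of a single artificial neuron learning the logical OR function. It's a toy, nowhere near an image-recognition network, and the function names and numbers (epochs, learning rate) are my own illustrative choices. Each pass makes a guess, measures how wrong it was, and nudges the weights in the direction that reduces the error (plain gradient descent on a logistic neuron).

```python
import math
import random

# Training examples: two inputs and the "right answer" for each (logical OR).
EXAMPLES = [((0.0, 0.0), 0.0), ((0.0, 1.0), 1.0), ((1.0, 0.0), 1.0), ((1.0, 1.0), 1.0)]


def sigmoid(z):
    """Squash any number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))


def predict(weights, bias, x1, x2):
    """The neuron's guess: weighted sum of the inputs, squashed into (0, 1)."""
    return sigmoid(weights[0] * x1 + weights[1] * x2 + bias)


def train(examples, epochs=2000, learning_rate=0.5, seed=0):
    rng = random.Random(seed)
    # Random starting weights: the first guesses are essentially noise (the "fish").
    weights = [rng.uniform(-1, 1), rng.uniform(-1, 1)]
    bias = rng.uniform(-1, 1)
    for _ in range(epochs):
        for (x1, x2), target in examples:
            guess = predict(weights, bias, x1, x2)   # guess
            error = guess - target                   # compare
            # Refine: step each parameter against the error
            # (this is the gradient of the cross-entropy loss for a sigmoid output).
            weights[0] -= learning_rate * error * x1
            weights[1] -= learning_rate * error * x2
            bias -= learning_rate * error
    return weights, bias


weights, bias = train(EXAMPLES)
for (x1, x2), target in EXAMPLES:
    print((x1, x2), "target:", target, "guess:", round(predict(weights, bias, x1, x2), 3))
```

After training, the guesses for the three "true" cases sit well above 0.5 and the guess for (0, 0) sits well below it. A real network stacks thousands of these neurons in layers, but the loop is the same idea.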

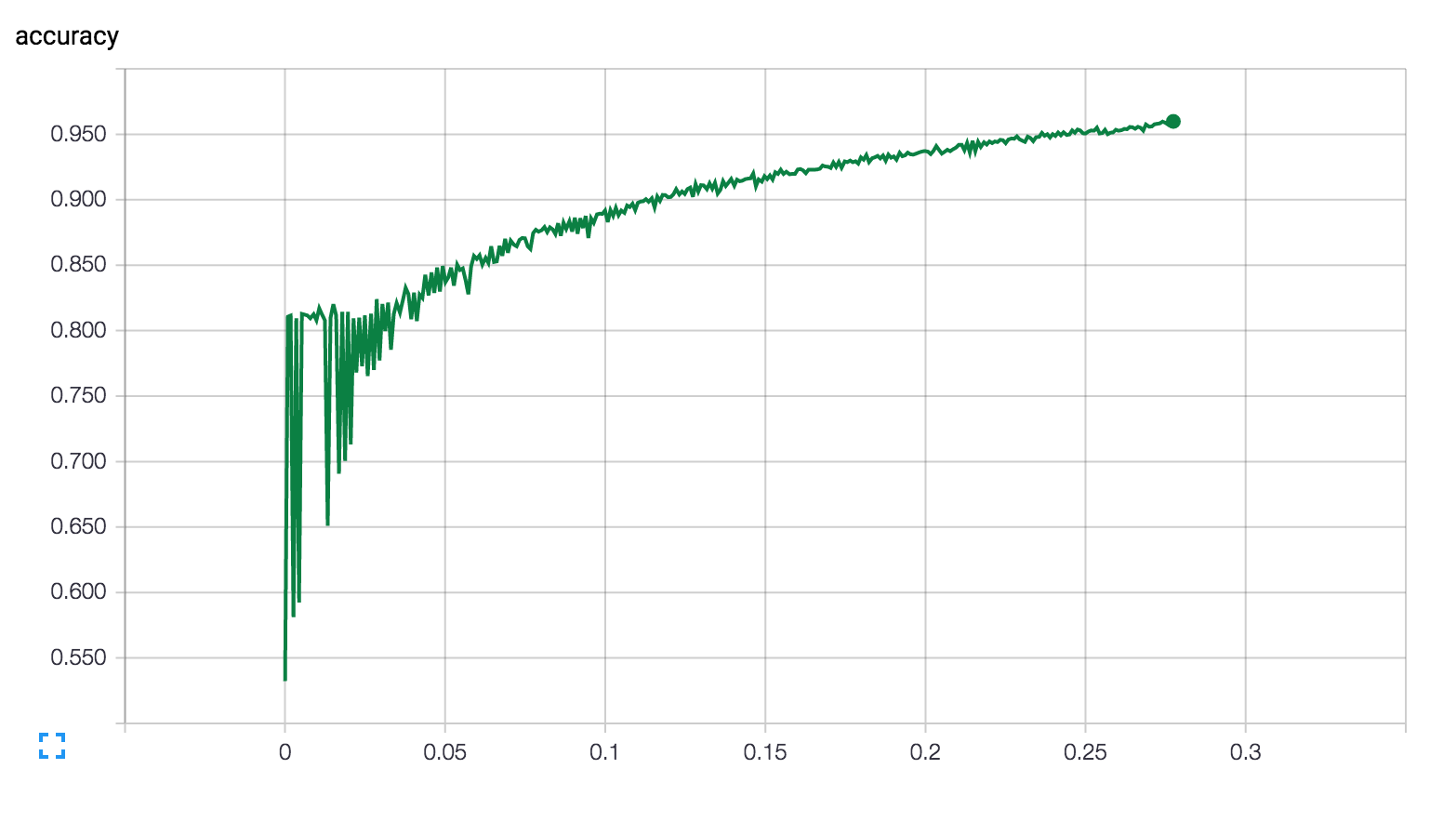

A graphical representation of a neural network "learning" as it checks the accuracy of its output against the model.

What does any of this have to do with music? Well, not much. Yet. But we can apply the same sort of technology to music, essentially "teaching" a computer what a given genre of music sounds like and then letting it generate new music based on that model. Recently a team at Google released Magenta, an open-source project built on TensorFlow that provides a framework for training neural networks on MIDI files.

Using this library and a very powerful server rented from Amazon, I started training my own recurrent neural network (RNN). I began by collecting 225 MIDI files from the Beatles catalog and feeding them all into the network. The results were largely nonsensical:
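A melody model can't read a MIDI file directly: the notes first have to be flattened into a sequence of symbols, and the RNN learns to predict the next symbol from the ones before it. Here's a simplified, hypothetical encoding in Python. Magenta's melody models use a broadly similar one-event-per-time-step idea, but the `Note` type, the `NOTE_OFF`/`NO_EVENT` constants, and both functions below are my own illustration, not Magenta's API. It assumes a monophonic line whose notes are already quantized to steps (for example sixteenth notes).

```python
from typing import List, NamedTuple

NOTE_OFF = -1  # hypothetical marker: silence begins on this step
NO_EVENT = -2  # hypothetical marker: keep doing whatever the previous step did


class Note(NamedTuple):
    pitch: int       # MIDI pitch number, e.g. 60 = middle C
    start_step: int  # onset, in quantized steps
    end_step: int    # first step after the note ends (exclusive)


def notes_to_events(notes: List[Note]) -> List[int]:
    """Flatten a monophonic line into exactly one event per time step."""
    if not notes:
        return []
    total_steps = max(n.end_step for n in notes)
    events = [NO_EVENT] * total_steps
    for note in sorted(notes, key=lambda n: n.start_step):
        events[note.start_step] = note.pitch
        # Mark the silence after this note, unless another note already starts there.
        if note.end_step < total_steps and events[note.end_step] == NO_EVENT:
            events[note.end_step] = NOTE_OFF
    return events


def training_pairs(events: List[int], window: int):
    """Slide a window over the events: each input is `window` events, the target is the next one."""
    return [(events[i:i + window], events[i + window]) for i in range(len(events) - window)]


melody = [Note(67, 0, 2), Note(69, 2, 4), Note(71, 6, 8)]  # G, A, a rest, then B
print(notes_to_events(melody))  # [67, -2, 69, -2, -1, -2, 71, -2]
```

Once every song is a list of integers like this, "learning the Beatles" becomes a next-symbol prediction problem over the pairs that `training_pairs` produces.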

But IMPORTANTLY, they were results. The network was able to process the inputs and generate its own output without any manipulation by humans. Based on those initial results I refined the inputs: I limited the source material to 10 Beatles tracks with similar time signatures and keys, and removed the rhythm section from each track. (The network isn't yet smart enough to tell the difference between a "rhythm" note and a "melody" note, so all note values get averaged together. Since rhythm patterns are typically quite different from melodic ones, mixing them can completely throw off the model. I haven't tried the reverse yet, but there's no reason the network shouldn't be able to handle purely rhythmic data.) I also fed the network a G major scale to use as a primer. These results were much more promising:
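A "primer" is just a few starting notes that the model continues from. Training a real RNN won't fit in a blog snippet, so the sketch below swaps in a much simpler technique, a first-order Markov chain (each next pitch depends only on the current one), to show the priming idea. The G major primer is one octave starting on G4 (MIDI note 67); the function names and the tiny training melody are hypothetical, chosen for illustration only.

```python
import random
from collections import defaultdict
from typing import Dict, List

# One octave of G major starting on G4: G A B C D E F# G (as MIDI pitch numbers).
G_MAJOR_PRIMER = [67, 69, 71, 72, 74, 76, 78, 79]


def build_transitions(melodies: List[List[int]]) -> Dict[int, List[int]]:
    """Record every pitch that followed each pitch across the training melodies."""
    transitions = defaultdict(list)
    for melody in melodies:
        for current, following in zip(melody, melody[1:]):
            transitions[current].append(following)
    return transitions


def continue_from_primer(transitions, primer, length, seed=None):
    """Start from the primer and sample up to `length` more pitches.

    Successors are picked in proportion to how often they appeared in training.
    """
    rng = random.Random(seed)
    output = list(primer)
    for _ in range(length):
        choices = transitions.get(output[-1])
        if not choices:  # dead end: this pitch never had a successor in the training data
            break
        output.append(rng.choice(choices))
    return output


training = [[79, 78, 76, 74, 72, 71, 69, 67, 79]]  # a made-up descending G major line
model = build_transitions(training)
print(continue_from_primer(model, G_MAJOR_PRIMER, 4, seed=1))
```

The primer matters because the model can only continue from states it has seen: prime it with a pitch that never appears in the training data and it has nothing to say, which is one intuitive reason a primer in the same key as the source material helps.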

We can clearly identify this output as "musical", if not quite "music". This is progress! At this point I started looking for other MIDI libraries to use as source material. I wanted something less complex than the Beatles' multi-instrument tracks, while also paying tribute to the purely digital nature of the project. I found several Daft Punk tracks that seemed appropriate:

Now we're getting somewhere! This almost sounds like it could have been written by a human. There are random pauses, and some notes repeat or hold unexpectedly (more on this below), but overall there is clear musical quality to the output.

So what is this actually good for? I don't know! But I have a few ideas. I'm most interested in using this as a tool to further my own songwriting skills. If you talk to data scientists, many of them will tell you about artificial intelligence and the singularity, and perhaps mention the similarities between a neural network and a human brain (the name itself is an obvious nod to this, using "neurons" to process input). But like I said, neural networks aren't really like brains at all, at least not in any way we conceive of them. And that's the most fascinating and exciting part for me: no matter how "intelligent" they get, they're never going to "think" like a human. A neural network will always approach pattern recognition and problem solving through a logical process governed by rules fundamentally distinct from the ones a human uses.

What's so exciting about that is the potential for a computer to propose ideas that a human might never think of. So for me, it's less about writing software that can compose a symphony on its own, and more about creating a tool that helps living, breathing musicians find new directions for their art. (One other quick note: there's an easy trap to fall into here of giving a neural network TOO much credit for what it can accomplish. It's designed by humans and will ultimately be subject to all of the same flaws, biases, and limitations as the humans who build it. It's super important to keep this in mind; a neural network is not going to create a new genre of music. Admittedly the stakes in this case are pretty low, but bad things happen when humans pretend algorithms are infallible.)

The most recent run I performed used the same Daft Punk source material, but with a few tweaks to output duration. I pulled a few "loops" out of the output and added some minor effects, but all of the "music" here is still entirely machine-generated (the next step is probably adding a beat, perhaps also generated by the RNN):
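Output duration and loop extraction both come down to a little arithmetic on time steps. Here's a minimal sketch, assuming 4/4 time and four steps per quarter note (sixteenth-note resolution); those defaults, and the helper names, are my own assumptions for illustration rather than settings from this run. A quarter note lasts 60 / bpm seconds, so a step lasts 60 / (bpm × steps per quarter) seconds, and cutting loops on whole-bar boundaries keeps them repeating cleanly.

```python
def steps_to_seconds(num_steps, bpm, steps_per_quarter=4):
    """Each quarter note lasts 60 / bpm seconds; each step is a fraction of that."""
    return num_steps * 60.0 / (bpm * steps_per_quarter)


def steps_for_bars(bars, beats_per_bar=4, steps_per_quarter=4):
    """How many steps to request from the generator for a given number of bars."""
    return bars * beats_per_bar * steps_per_quarter


def extract_loop(events, start_bar, bars, beats_per_bar=4, steps_per_quarter=4):
    """Slice whole bars out of a per-step event list so the loop lines up with the beat."""
    bar_len = beats_per_bar * steps_per_quarter
    start = start_bar * bar_len
    return events[start:start + bars * bar_len]


steps = steps_for_bars(8)               # 8 bars of 4/4 at 16 steps per bar = 128 steps
print(steps, steps_to_seconds(steps, bpm=120))  # 128 16.0
```

At 120 bpm a 4/4 bar takes two seconds, so eight bars come to sixteen seconds, which is a handy sanity check when asking the generator for a specific length.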

Next, I'm going to start composing my own inputs for the neural network; I'd love to get to a place where I can "collaborate" with it. I'm also excited to see this platform mature as support for polyphony and MIDI CC is added. I'll update this post periodically with new content, and you can follow along with the Magenta project on GitHub. Also, feel free to drop me a line if you'd like to discuss any of this or if you want to collaborate!

© Copyright , Adam Salberg. All rights reserved.